The Role of Invariant Manifolds

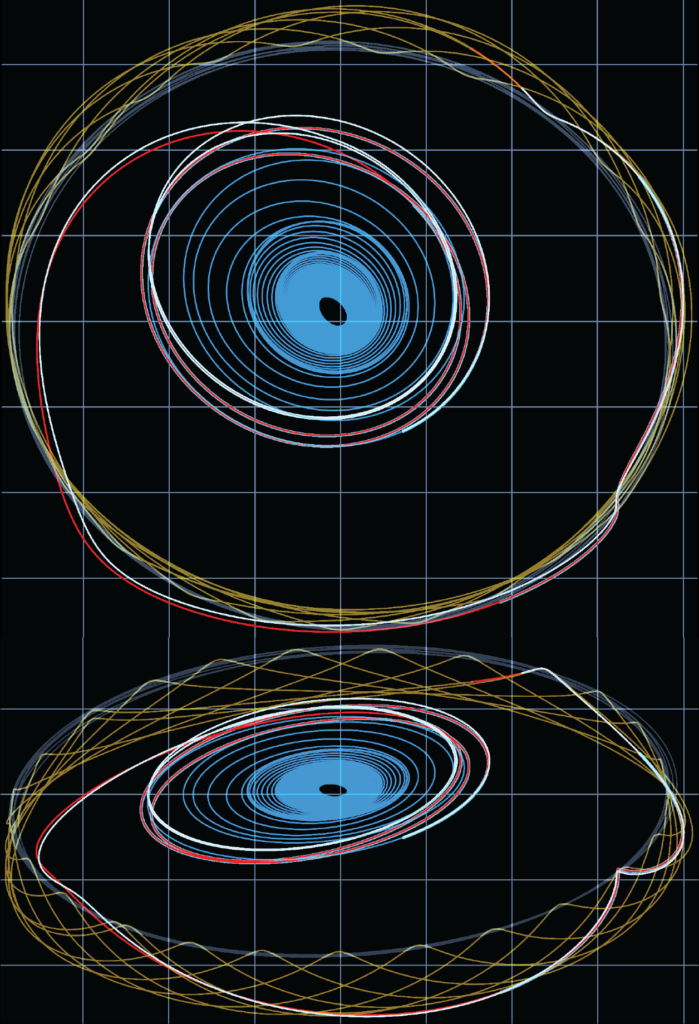

The Circular Restricted Three-Body Problem (CR3BP) provides an approximation of the conservative dynamics that govern the motion of a spacecraft in the Earth-Moon system. It is a gateway model developed by Henri Poincaré at the turn of the century to study the stability of the Solar System and is noticeably different from the simpler relative two-body model that gives way to Keplerian motion as it is a nearly integrable Hamiltonian system that exhibits chaos. Crucially, the model has five relative equilibria, known as the Euler-Lagrange points, and a dense set of periodic orbits. Two of the collinear Euler-Lagrange points are of particular convenience for space missions as they act as minimum energy transit zones between the two gravitational bodies of the CR3BP (e.g., the Earth and Moon) and the exterior realm (e.g., the Solar System). Special periodic orbits that are centered about the collinear equilibria are the Lyapunov and Halo orbits, which have been used or are planned in many space missions; these include space weather observing missions (e.g., at Sun-Earth $L_1$), astronomy missions (e.g., James Webb at Sun-Earth $L_2$), space domain awareness (e.g., the USSF Oracle mission at Earth-Moon $L_1$) and lunar outposts (i.e., the NASA led Gateway at the Earth-Moon $L_2$ 9:2 Near Rectilinear Halo Orbit (NRHO)).

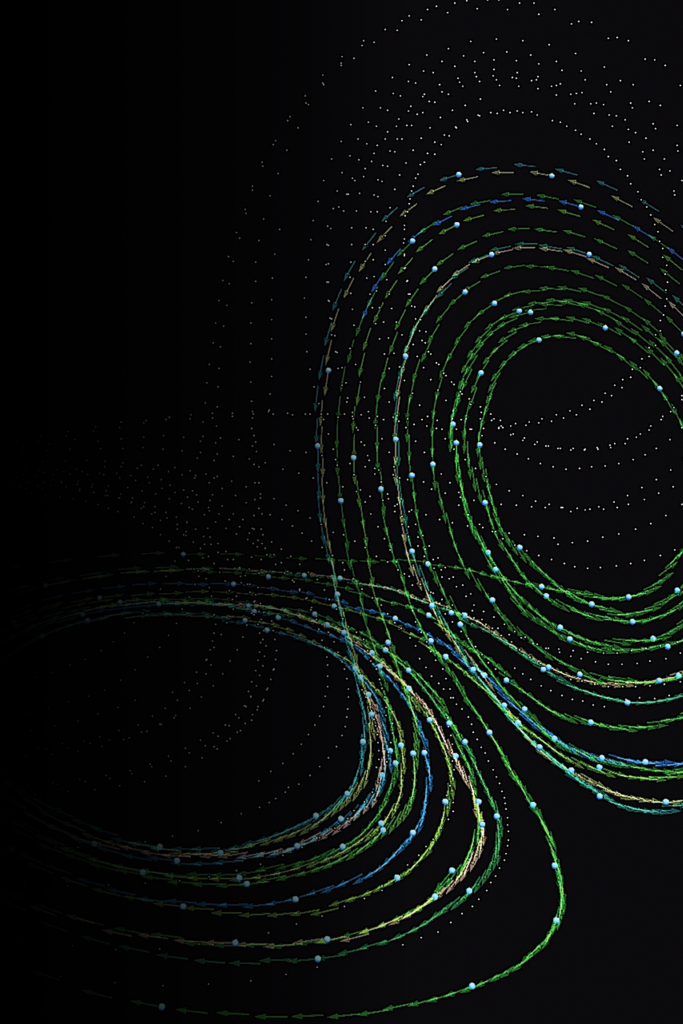

These special orbits and others of the CR3BP that are unstable possess invariant manifolds that constrain and guide the global flow of the system (i.e., separatrix). Hence these orbits and their invariant manifolds, which we define as dynamical structures (DS) for brevity, are therefore valuable as final destinations and efficient transit mechanisms. A systematic approach for designing space missions that make use of these DS is relatively straightforward for the case of an impulsive (chemical) spacecraft. The original techniques of Poincaré are helpful in this case. But modern space flight also consists of more efficient transfer mechanisms enabled by low thrust propulsion. Unfortunately, low thrust solutions bring about their own complications to the mission design problem. In particular, they are characteristically long duration low magnitude continuous forcing on the dynamics, and therefore the direct application of Poincaré’s techniques are no longer as straightforward for mission design. Intuitively, low thrust solutions, and in particular minimum energy optimal control solutions, should shadow DS for efficient transit. This concept of shadowing has indeed been explored numerical, but is largely taken for granted by practitioners of multibody low thrust mission design.

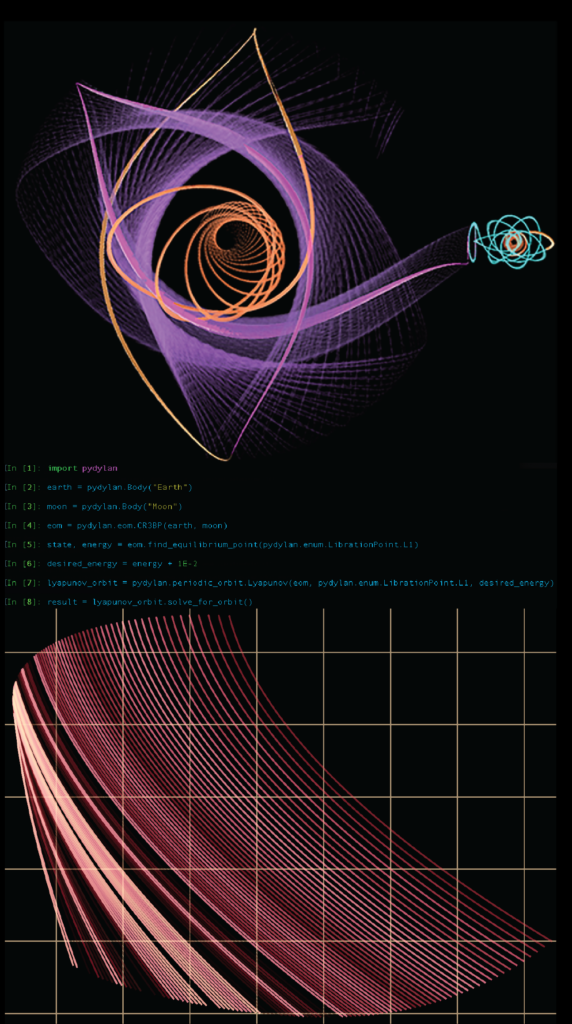

An ongoing theme of research in our group is aimed at making this idea of shadowing precise. One motivation for doing so is that it will enable better automated global search for low thrust optimal trajectories in multibody environments. This is for example, a central aim of our group’s software pydylan. Specifically, we hope to develop a more systematic design approach for low thrust optimal trajectories in multibody environments, similar to the existence of known approaches currently in the impulsive case. To accomplish this objective, our group is taking a two-pronged approach. The first path leverages our existing numerical toolsets, such as pydylan, and high performance computing to produce a statistical study of low thrust solutions and their relation to DS. The second path is an analytic approach whereby we are attempting to revisit simpler lower dimensional problems with known solutions and create small extensions that build in the DS and characteristics of low thrust as seen in realistic space flight problems.

Robust Trajectories

Modeling optimal spacecraft trajectory problems as deterministic is convenient, but in reality there are always a priori unknowns about the system of equations, parameters for the system, initial conditions, and disturbances. To accurately reflect these various sources of uncertainty we should pose our optimal control problem as finding an optimal solution $u^*$ from an admissible set $\mathcal{U}$ that minimizes the following cost function,

$$\psi \circ J(u; \omega) = \mathbb{E}_{\mathbb{Q}_\psi} \left[ \phi(X_1( \omega)) + \int_0^1 \mathcal{L}(s, X_s(\omega), u_s) ds \right],$$

where $\psi$ is a linear functional identified with the probability measure $\mathbb{Q}_\psi$ via the Riesz-Markov-Kakutani theorem and $J(u; \omega)$ is a cost function dependent on the random sample $\omega$. The spacecraft state satisfies the stochastic differential equation,

$$X_t = X_0(\omega) + \int_0^t f(s, \omega, X_s(\omega)) ds + \int_0^t g(s, \omega, X_s(\omega), u_s) d\xi(s, \omega, u_s), \quad \forall t \in [0, 1],$$

where we normalize the time horizon to the unit interval. The optimal solution must also satisfy probabilistic initial and terminal boundary conditions,

$$ \mathbb{P}(X_0(\omega) \in \Xi_0) \geq 1 – \epsilon_{\Xi_0}, \quad \textrm{and} \quad \mathbb{P}(X_1(\omega) \in \Xi_1) \geq 1 – \epsilon_{\Xi_1},$$

and the probabilistic path constraints,

$$\mathbb{P}(\varphi(X_t(\omega)) \leq 0) \geq 1 – \epsilon_\varphi, \quad \forall t \in [0, 1],$$

where $\epsilon_{\Xi_0}, \epsilon_{\Xi_1}$, and $\epsilon_\varphi$ take values in the unit interval $[0, 1]$.

One type of robust trajectory that is of particular interest to our research group is the Missed Thrust Event (MTE) problem. This problem is of particular interest in the case of low thrust spacecraft where engine out events (i.e., the thruster is unable to provide the planned control due to safe events or unexpected outages) are more common than one would first expect. This is partly due to the long durations over which low thrust is applied; sometimes being on the order of weeks or months.

Solving for optimal low thrust trajectories without accounting for MTEs can result in a planned trajectory that is unable to achieve the mission objectives if an MTE is to occur. For example, consider the case of a gravity assist (GA) maneuver and a low thrust burn placed shortly before the GA. If a MTE occurs during the planned burn, the spacecraft could possible collide with the planet being used for the GA or more likely have a close approach with too much altitude to yield a significant momentum exchange. Therefore in the case of MTEs, it is advantageous to solve for a robust optimal solution a priori, which may trade some performance for a reduction in mission sensitivity to this type of event.

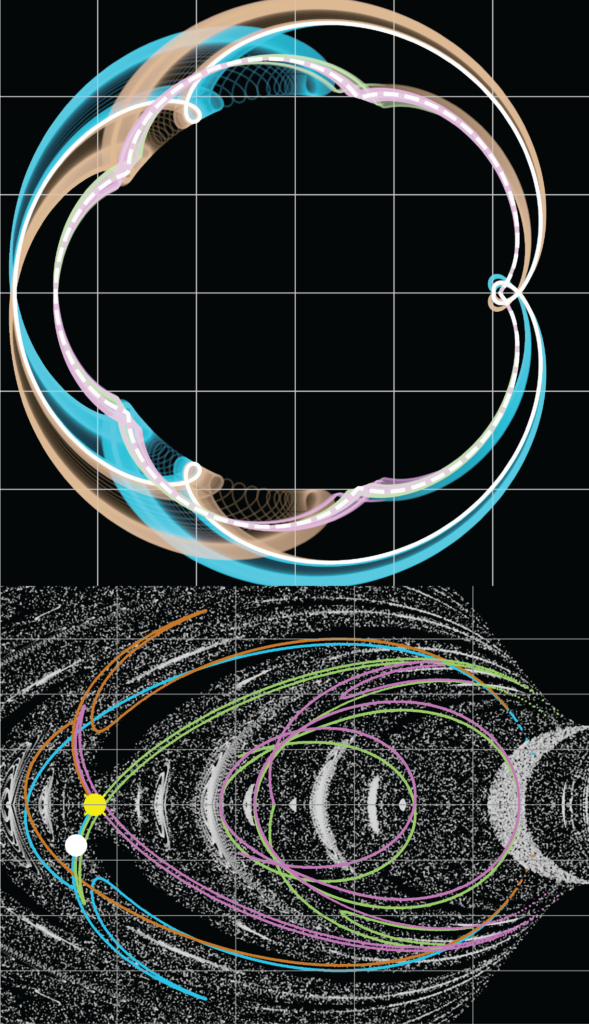

Our research group is pursuing a number of approaches to better understand the behavior of robust optimal low thrust trajectories, how to improve numerical algorithms to solve for such solutions in the face of a curse of dimensionality issue, and how to best formulate these as optimal control problems such that mathematical statements of relevance can be made. With regard to the behavior of robust solutions, we are pursuing questions and approaches raised in our research on the role of invariant manifolds. On the topic of formulating the robust optimal control problem, we are considering the application and extension of the nascent field of bilevel (more generally multilevel) optimal control, which we arrive at by appropriate restrictions of the aforementioned optimal control problem. In particular, the use of stopping times and the modeling of the sample space as the unit circle where the origin represents the resultant reference solution may be helpful in constructing an appropriate theory. From this developing theory and alternative algorithms for optimal control, we have and continue to implement new approaches in pydylan and test them on a range of problems, including stages of the NASA Artemis and Gateway architectures such as the low thrust transfer of the Power and Propulsion Element (PPE) of Gateway as shown in the image to the left.

Data Driven Global Search

& Machine Learning

For an astrodynamicists, the early stages of mission design and formulation (e.g., NASA pre-phase A or phase A) are inherently a global search problem, whereby the astrodynamicists is interested in finding a large collection of high quality feasible solutions that can be traded based on evolving mission objectives and requirements. Elevating the search to that of locally optimal solutions provides one way to measure the quality of a solution.

With the exception of simple models, solutions to optimal spacecraft trajectory problems rely on numerical approaches. The formulation of an approximating numerical problem requires three steps: 1. the formulation of the optimal control problem; 2. the choice of solution via indirect or direct formulation; 3. the application of a control transcription approach to create a parameter optimization problem; and 4. the solution of the parameter optimization problem using mathematical programming solvers. A common and versatile approach is to use a direct approach with a transcription that yields a specific parameter optimization problem called a nonlinear program (NLP), which can be defined as follows,

$$ \begin{align*} &\quad \inf \limits_{u \in \mathcal{U}^n \subseteq \mathbb{R}^n} J^n(u) \\

\text{subject to} &\quad c_i(u) = 0, \ \forall i \in \mathcal{E}, \\

&\quad c_i(u) \leq 0, \ \forall i \in \mathcal{I},\end{align*}$$

where $n \in \mathbb{N}$ is the degrees of freedom, $\mathcal{U}^n$ an admissible control set, $J$ a nonlinear cost function, $c_i$ are nonlinear constraint functions, and $\mathcal{E}, \mathcal{I}$ are the equality and inequality index sets.

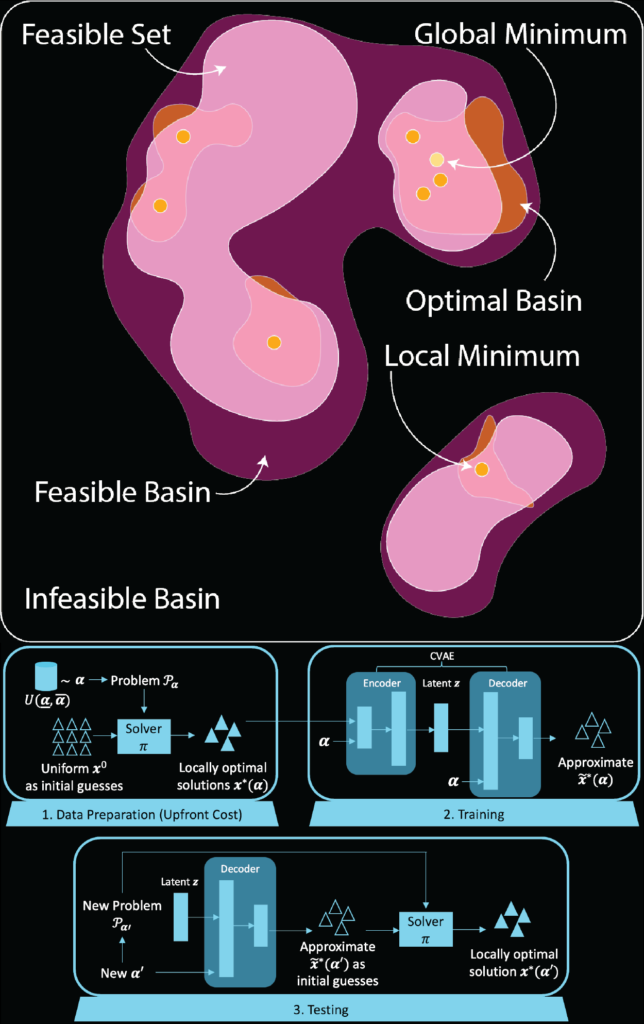

NLP solvers are gradient based and require an initial guess $u_0$. The output of the solver $\pi$ is a sequence of primal and dual states $(u_k, \lambda_k)$ for the NLP. If successful, the sequence will converge to a local optimal solution $(u^*, \lambda^*)$. The goal of a high quality global search algorithm is therefore to provide initial guesses $(u_0, \lambda_0)$ that are near local optimal solutions, so that the solver $\pi$ requires very few iterations to converge.

Our research group focuses on how we can improve the state-of-the-art for global search algorithms to nonlinear optimal control problems, with motivation coming from space flight problems, and in particular low thrust trajectories. The state-of-the-art in astrodynamics is a two-level algorithm known as Monotonic Basin Hopping (MBH), which was originally introduced for solution of protein folding problems and was introduced by Dario Izzo of the European Space Agency (ESA) for trajectory design and further explored by Jacob Englander in his interplanetary mission design tool Evolutionary Mission Trajectory Generator (EMTG). MBH is also currently used in pydylan. MBH can be summarized as sampling from fixed a prior probability distributions, with one representing the global search and the other a local search. For example, in the simplest case, one might choose a uniform distribution over the entire domain for one (the outer level) and a uniform distribution with support on a small open neighborhood (i.e., a ball) for the local search (the inner level). Heuristics are then used to switch between samples from each of the distributions, with the inner distribution translating throughout the domain. A central deficiency of MBH and other global search algorithms is that they do not learn from either offline or online data and therefore a user must hand-tune the algorithms based on experience.

In the Beeson Group we are researching approaches that leverage both offline data (e.g., data generated from prior global optimization problems that are similar to a new one) and online data (e.g., data generated during a global search). In particular, we consider partitions of the primal and dual domain into sets that reflect various neighborhoods of convergence for the solver $\pi$ to a feasible solution, locally optimal solutions, or globally optimal solutions. Our aim is then to approximate a high dimensional distribution with support on the useful subsets (e.g., basins of attraction, as shown in the side figure). We then use a combination of cluster techniques, generative modeling and learning on sequential data to identify important structure in the problem domain and generate novel initial guesses for potential new variations of the original NLP. Although motivated by problems in space flight, the methodology being applied by the Beeson Group in this research area has potential for impact to both global optimal control searches and online re-optimization of control solutions for broader problems.

Cislunar Space Policy

In recent years, there has been a concerted effort across space agencies to encourage the mitigation of debris generation as part of mission and spacecraft design processes. The introduction of the Orbital Debris Mitigation Standard Practices (ODMSP) in 2019 was a key step towards instituting a standard for mitigating debris risks for forthcoming space missions. However, the rapid increase in public and private space activity over recent decades far outpaces the adoption and adherence to such policy guidelines, as evidenced by the NASA Office of the Inspector General (OIG) report which determined that purely mitigative strategies would no longer be sufficient to ensure sustainability of key low-Earth orbits (LEO).

As humanity aims to build a sustained presence at the Moon and foster human exploration of the Solar System, lunar and cislunar orbits will see a similar increase in space activity. Ensuring the safe and sustainable growth of missions in the cislunar domain thus necessitates meaningful measures towards mitigating the risk of orbital debris generation. The planned expansion to cislunar space, however, presents us with the opportunity to establish preventative practices towards minimizing orbital debris generation at the outset instead of adapting in a reactive manner as with LEO. Establishing a set of comprehensive policy directives to guide the development of operational procedures for missions to mitigate the risk of orbital debris generation becomes especially incumbent on policy makers as we consider the immediate future of the Artemis program. A key component of the program that will be launched over the next few years is the Lunar Gateway, which will serve as a multi-purpose outpost orbiting the Moon that provides a habitat for astronauts, as well as a rendezvous point for spacecraft going to and from the lunar surface, and possibly acting as key infrastructure for human missions to Mars and beyond.

Our research aims to further technical understanding of debris generation, propagation, and behavior in cislunar space, as well as analyze the coverage of existing policy infrastructure to address the safety of cislunar missions with regards to debris risks. With our research, we aim to generate a set of policy directives, informed by the technical studies of debris dynamics in cislunar orbits, to guide the development of forthcoming cislunar missions. Such directives, coupled with recommended methods for their rapid adoption, will make a meaningful impact to the Artemis program as well as missions to the cislunar domain in the longer term.

Data Assimilation

Data Assimilation (DA) consists of solving for the “best” estimate of a system given noisy partial observations. To be precise, we call the system that we would like to estimate the signal process $(X_t)_{t \in \mathbb{T}}$ and the measurements of the signal the observation process $(Y_t)_{t \in \mathbb{H}}$, where $\mathbb{T}, \mathbb{H}$ are index sets representing time. We assume that the measurements are corrupted by noise and typically we assume the same for the signal process. The solution of this mathematical problem is a conditional probability measure $\pi$. DA is further classified into three types of problems: smoothing, filtering, and prediction. If $\varphi$ is a measurable function of the measurable space that $X_t$ takes values in, then the filter (i.e., filtering solution) is given by,

$$ \pi_t(\varphi) = \mathbb{E} \left[ \varphi(X_t) \middle| \mathcal{F}^Y_t \right],$$

where $\mathcal{F}^Y_t$ is the filtration of the observation process up to and including time $t$. In the case of smoothing, the measure $\pi$ is defined on a product space representing the likely past given past and current observations. In the case of prediction, the measure is again defined on a product space, but this time representing the future path given the past and current observations.

Generically, the solution to the DA problem does not usually have a closed solution. The best known case of a closed solution for smoothing and filtering is when both the signal and observation processes are linear in the state and have additive Gaussian noise in the discrete-time case or driven by a Brownian motion in the continuous-time case. The solution in these cases are named after Kalman and Kalman-Bucy.

One approach to solving the general nonlinear non-Gaussian filter problem is by using ensemble or particle methods. The standard particle filtering (PF) method in the discrete-time observation case makes use of Bayes’ formula to update the weights of the particles when new observations are available. Although more versatile than other approaches based on linearity, linearization, or Gaussian approximation assumptions, particle filtering algorithms suffer from what is known as particle degeneracy (or collapse, or impoverishment). The central issue is that with increasing dimensionality, the probability that a particle provides an observation near that of the true measurement is increasingly low, and hence very few particles will eventually possess all the cumulative weight of the ensemble. This essentially renders the probability $\pi$ to be approximated by a Dirac measure $\delta$.

High Dimensional Chaotic or Turbulent Systems

Particle degeneracy is made worse for dynamical systems that are chaotic or turbulent. Unfortunately, problems in the geosciences (e.g., atmospheric, oceanic, or climate) and space weather (e.g., solar wind, coronal mass ejection, thermosphere-ionosphere, and space climate) have these properties. Additionally, these problems are high dimensional and sparse in observations (especially in the space weather case, where even the dynamical modeling can be greatly improved in the future). Improving our ability to estimate and predict the states of these systems is imperative to avoiding devastation to vast human infrastructure and their indirect impacts on civilization.

Our research group works on both theory and algorithm development that we hope will enable efficient, realistic, and robust representation of the DA solution in the high dimensional chaotic or turbulent case with sparse observations. In particular, we make use of both optimal control and optimization approaches to attempt to mitigate particle degeneracy. We are also exploring how our control based methods might be used in a hybrid framework with other control based approaches in the literature, such as flow based and mean-field approaches. These alternative control approaches have the valuable property that the weights of the particles never deviate from the evenly weighted case (hence avoiding degeneracy), but haven’t yet been successfully applied to high dimensional chaotic systems in an efficient manner.

Separately from control based approaches, our group also develops theory using multiple timescale properties and averaging theory to yield approximation results for a lower dimensional DA solution. Reducing the dimensionality therefore allows us to partially stall degeneracy. Similarly, our group has also developed early techniques for dimensional reduction in PF algorithms by extracting important subspace information from the dynamical flow, which is relevant for dynamical systems that are chaotic.

Space Situation & Domain Awareness

At the core of both Space Situational Awareness (SSA) and Space Domain Awareness (SDA) is a DA problem. In particular, space operators need to model the uncertainty in the state of their spacecraft (e.g., position and velocity) as well as external objects, so that they can make command and control decisions (e.g., to maneuver their spacecraft to avoid orbital debris or detect the likelihood that another spacecraft has maneuvered). Methods for the solution of accurate and efficient Uncertainty Realism, Propagation, and Estimation (URP&E) has thus far been largely focused on the near-Earth case, but the emergence of greater space infrastructure in the cislunar domain (e.g., the Artemis missions and numerous nations and commercial entities sending spacecraft to the Moon) will require the development of new approaches. In particular, the cislunar domain requires a more complex dynamical model given by at least the CR3BP as mentioned in the role of invariant manifolds. Observational methods are also confronted with greater obstacles including phase angles with the Moon and Sun, as well as greater observational distances that greatly disadvantage active measurements. Lastly, the reliance on a centralized processing approach becomes less desirable in the cislunar domain, which would require greater communication requirements. Therefore efficient onboard URP&E is desirable to achieve a decentralized and autonomous approach.

Our research group is leveraging our work related to the dynamical systems concepts mentioned in the role of invariant manifolds to develop methods that are naturally adapted to multibody environments such as the cislunar domain. By developing new techniques that explicitly make use of our knowledge of dynamical structures, we can reduce the dimensionality of our approximating techniques for the DA solution $\pi$ and better anticipate the future evolution of the filtering solution (i.e., improved prediction).

pydylan

pydylan is a Python interface to the C++ implementation of the Dynamically Leveraged Automated (N) Multibody Trajectory Optimization Solver, also known as DyLAN that has been developed under the leadership of Prof. Beeson. Equivalently, pydylan is a Python package. It enables astrodynamicist and researchers to easily generate global impulsive and low-thrust optimal spacecraft trajectory problems in multibody systems and solve them numerically and with automated search capabilities. pydylan also enables the creation of pertinent dynamical structures for three-body problems (e.g., Lyapunov, Halo, and Resonant Orbits). Trajectory solutions can be solved under simple two-body dynamics, three-body models, or n-body models driven by NASA NAIF SPICE. Solutions with the n-body model requires access to ephemeris data that can be read by SPICE. n-body solutions can be exported into SPICE compatible data sets, that can then be imported to other tools for visualization or further analysis (e.g., Cosmographia). To solve optimal trajectories, pydylan requires access to a user installed copy of SNOPT 7.6 or 7.7.

In the near future, you’ll be able to find documentation and example scripts for pydylan at the following site: https://pydylan.github.io. Beta versions of the tool for educational, academic research, and commercial use will be made available as well. Information on requesting access to pydylan will be posted to the aforementioned site at an appropriate time.

The development of DyLAN was funded under a NASA Phase I SBIR (Contract #80NSSC18P1997) and Phase II SBIR (Contract #80NSSC19C0140) to CU Aerospace L.L.C. with subaward to Princeton University.

Research Funding

We greatly appreciate funding from a number of sources for our research.